Want to learn how to use your Voice Kit to control other IoT devices? You can start here with a Particle Photon (a Wi-Fi development kit for IoT projects) and Dialogflow (a tool for creating conversational interfaces). This tutorial will show how to make your Voice Kit communicate with Dialogflow (and Actions on Google) to control an LED light with the Photon by voice.

Get all the code for this example here.

What's included

This example ties together multiple technology platforms, so there are a few separate components included in this repo:

- dialogflow-agent - an agent for Dialogflow

- dialogflow-webhook - a web app to parse and react to the Dialogflow agent's webhook

- particle-photon - a Photon app to handle web requests, and to turn the light on and off

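We've included two separate web app implementations. Choose (and build on) the one that best suits your preferences:

- 1-firebase-functions - a microservices-style implementation that is easy to configure and requires no other infrastructure setup

- 2-app-engine - an implementation for a full server environment, which can also run on any other server of your choosing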

This should be enough to get you started and on to building great things!

What you'll need

We’ll build our web app with Node.js, and will rely on some libraries to make life easier:

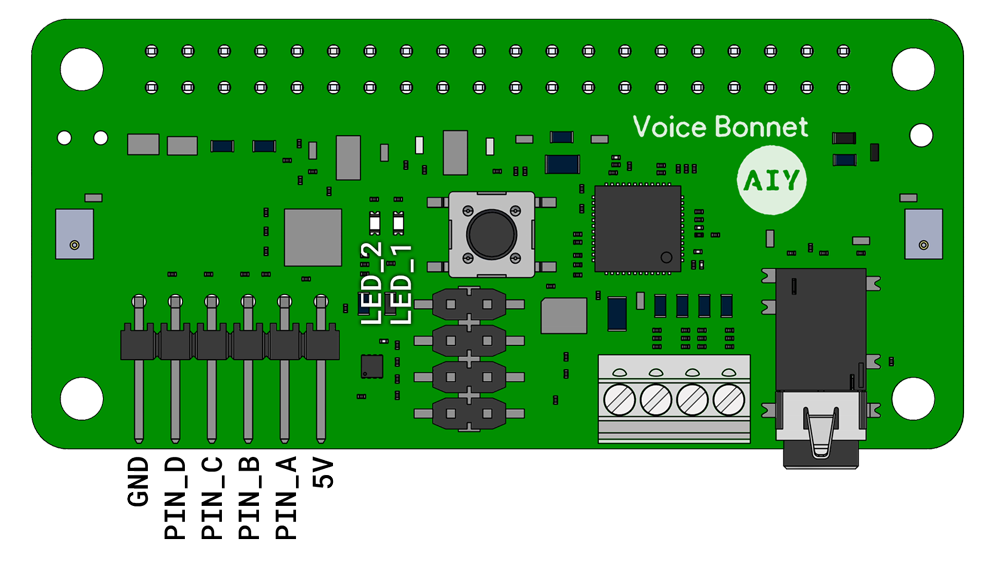

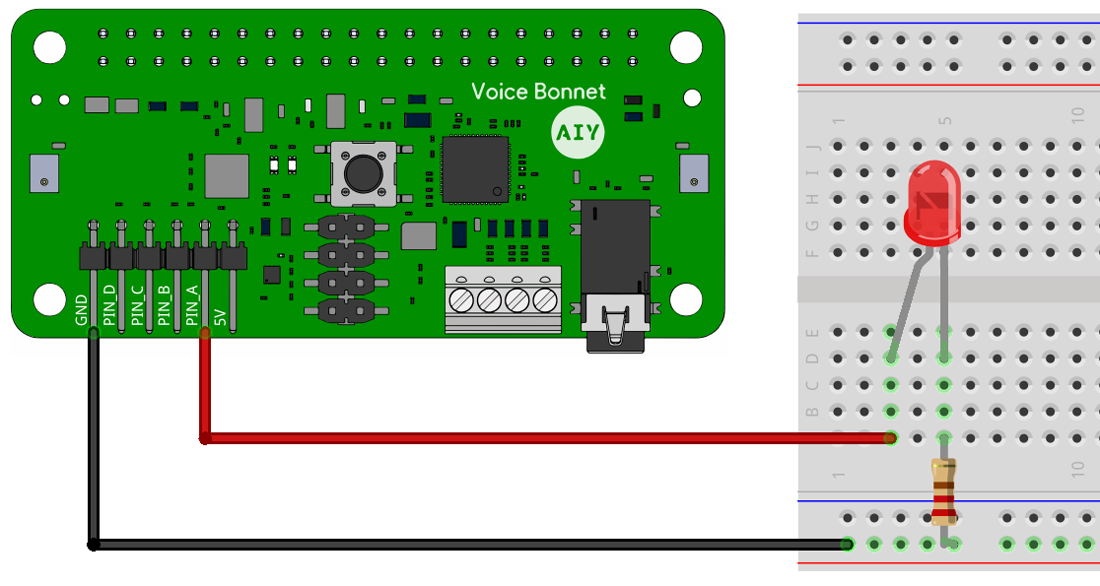

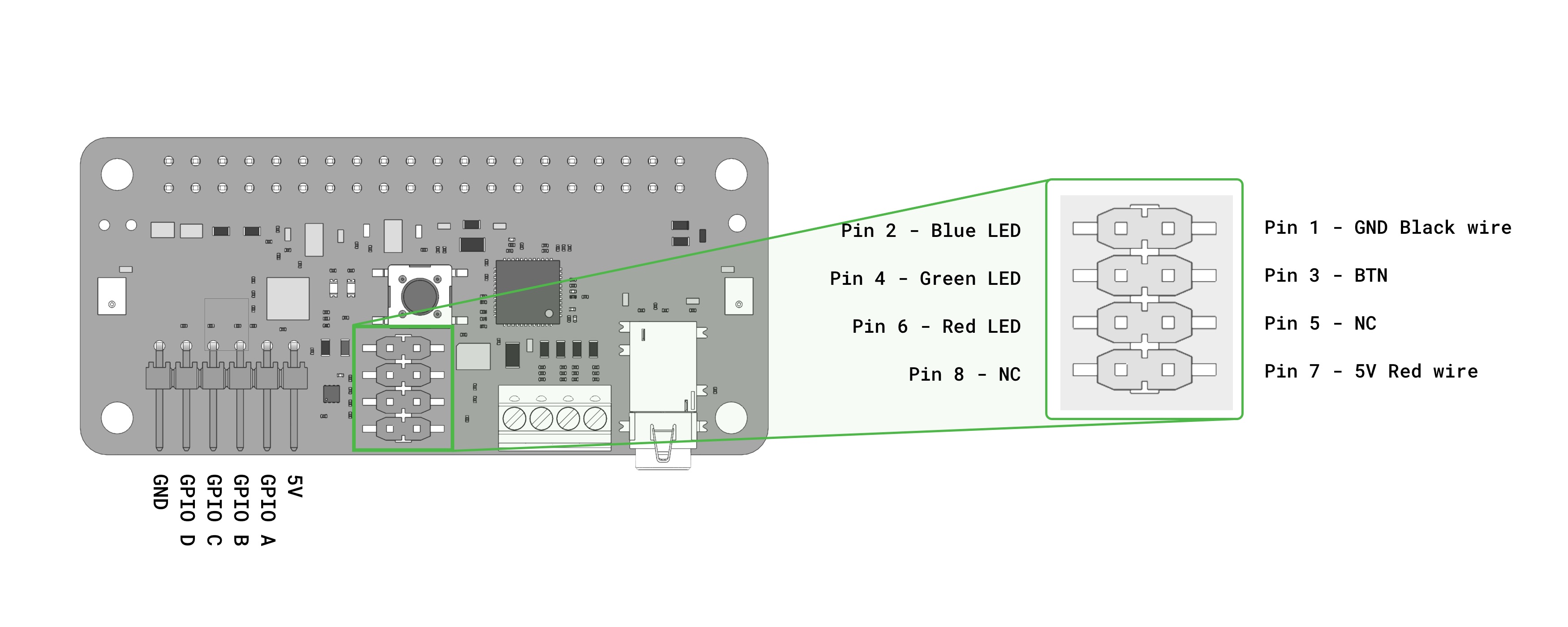

On the hardware side, you will need:

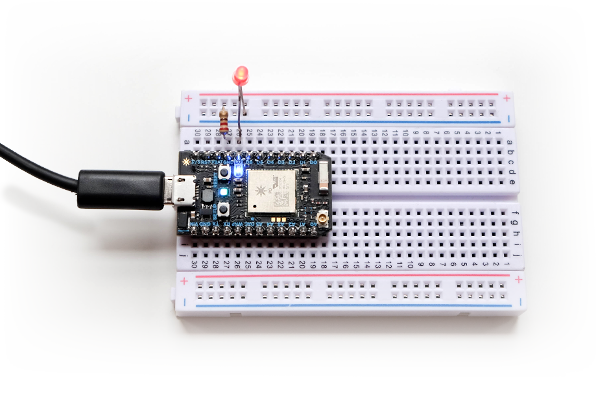

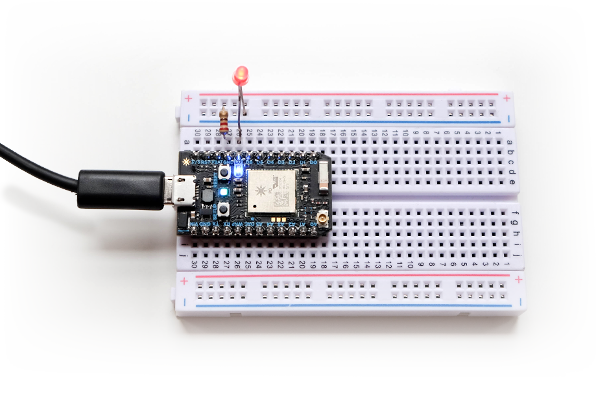

It's handy to have a breadboard, some hookup wire, and a bright LED, and the examples will show those in action. However, the Photon has an addressable LED built in, so you can use just the Photon itself to test all the code presented here if you prefer.

You'll also need accounts with:

- Dialogflow (for understanding user voice queries)

- Google Cloud (for hosting the webhook webapp/service)

- Particle Cloud (for deploying your Photon code and communicating with the Particle API)

If you're just starting out, or if you're already comfortable with a microservices approach, you can use the 1-firebase-functions example — it's easy to configure and requires no other infrastructure setup. If you'd prefer to run it on a full server environment, or if you plan to build out a larger application from this, use the 2-app-engine example (which can also run on any other server of your choosing).

If you've got all those (or similar services/devices) good to go, then we're ready to start!

Getting started

Assuming you have all the required devices and accounts as noted above, the first thing you'll want to do is to set up apps on the corresponding services so you can get your devices talking to each other.

Local setup

First, you'll need to clone this repo, and cd into the newly-created directory.

git clone git@github.com:google/voice-iot-maker-demo.git

cd voice-iot-maker-demo

You should see three directories (alongside some additional files):

- dialogflow-agent - the contents of the action to deploy on Dialogflow

- dialogflow-webhook - a web application to parse the Google Actions/Dialogflow webhook (with server-based and cloud function options)

- particle-photon - sample code to flash onto the Particle Photon

Once you've taken a look, we'll move on!

Dialogflow

Using the Dialogflow account referenced above, you'll want to create a Dialogflow agent. We'll be setting up a webhook to handle our triggers and send web requests to the Particle API.

- Create a new agent (or click here to begin). You can name it whatever you like

- Select Create a new Google project as well

- Open the Settings section (click on the gear icon next to your project name) and go to Export and Import

- Select Import from zip and upload the zip provided (./dialogflow-agent/voice-iot-maker-demo.zip)

You've now imported the basic app shell — take a look at the new ledControl intent (viewable from the Intents tab). You can have a look there now if you're curious, or continue on to fill out the app's details.

- Head over to the Integrations tab, and click Google Assistant.

- Scroll down to the bottom, and click Update Draft

- Go back to the General tab (in Settings), and scroll down to the Google Project details.

- Click on the Google Cloud link and check out the project that's been created for you. Feel free to customize this however you like.

- Click on the Actions on Google link, and go to 2 - App information

- Click Add, and fill in the details of your project there

- Add some sample invocations, as well as a pronunciation of your Assistant app's name

- Fill out the other required fields (description, picture, contact email, etc.)

- Scroll down to the bottom, and click Test Draft

You can now test out the conversational side of the app in one of two ways: in the Actions on Google simulator, or by talking to your application on any Assistant-enabled device that you're signed into.

However, if you’re following along step-by-step, it won't turn any lights on yet — we still have to set up the web service and the Photon app. Onward then!

Google Cloud

Depending on which hosting environment you want to use, cd into either ./dialogflow-webhook/1-firebase-functions or ./dialogflow-webhook/2-app-engine, and continue the setup instructions in that directory's README.md file.

IMPORTANT: Regardless of what hosting/deployment method you choose, make sure you return to the Dialogflow panel and go into the Fulfillment tab to update the URL field. Also, check that the DOMAINS field is set to "Enable webhook for all domains". Without doing these things, Dialogflow won't be able to talk to your new webhook.
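To make the webhook's job more concrete, here is a minimal Node.js sketch of the parsing step. It is not the code from either implementation in the repo. It assumes a Dialogflow v2-style request body (parameters under queryResult.parameters, reply in fulfillmentText), and the parameter name "state" and both helper names are hypothetical, so adjust them to match what your ledControl intent actually sends.

```javascript
// Sketch only: assumes a Dialogflow v2-style webhook body, where matched
// parameters arrive under queryResult.parameters. The parameter name
// "state" is hypothetical; use whatever your ledControl intent defines.
function parseLedCommand(body) {
  const params = (body && body.queryResult && body.queryResult.parameters) || {};
  const state = String(params.state || '').toLowerCase();
  // Only accept the two values the Photon example understands.
  return state === 'on' || state === 'off' ? state : null;
}

// Build a JSON reply in the shape a v2 webhook response uses.
function buildReply(command) {
  const text = command
    ? `Okay, turning the light ${command}.`
    : "Sorry, I didn't catch whether you wanted the light on or off.";
  return { fulfillmentText: text };
}

// Example with a hand-written request body:
const sample = { queryResult: { parameters: { state: 'On' } } };
console.log(buildReply(parseLedCommand(sample)));
```

Keeping the parsing in a small pure function like this makes it easy to check the webhook logic locally before pointing Dialogflow's Fulfillment URL at it.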

Particle

Make sure the Photon is correctly set up and connected. (If it's not configured yet, follow the steps in the Particle docs.)

You can upload your code to your Photon via the Particle web editor, the Particle Desktop IDE (based on Atom), or the Particle command-line tools.

We'll be using the CLI for this example, which you can install thusly:

sudo npm i particle-cli -g

To deploy via the command line, first make sure you're logged in:

particle login

You can find out the ID of your device by running:

particle list

Then upload the code using that ID:

particle flash [YOUR-DEVICE-ID] particle-photon/particle-blink-demo.ino

The Photon should blink rapidly while the upload is in process, and when it's done (and calmly pulsing cyan), you're ready to go.

Note: Make sure you generate a Particle access token, and add that token (along with your Photon's device ID) to your config.js file.

You can make sure it all works by running the following from your terminal:

curl https://api.particle.io/v1/devices/[YOUR-DEVICE-ID]/led -d access_token=[YOUR-ACCESS-TOKEN] -d led=on

If everything is configured properly, you should see something like the following:

{
  "id": "[YOUR-DEVICE-ID]",
  "last_app": "",
  "connected": true,
  "return_value": 1
}

You should see the Photon's light come on (along with an LED on the breadboard, if you've wired one up)! Doing the same with led=off will return a 0 instead of a 1, and will (you guessed it) turn the light off.

Note: If you ever see a "return_value":-1, that's an error message — something has gone wrong somewhere.
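If you'd like to make the same call from Node.js rather than curl, something along these lines should work. This is a sketch under a few assumptions: it needs Node.js 18 or later (for the built-in fetch), the endpoint, the led function name and the on/off values are taken from the curl example above, and the helper names (buildLedRequest, describeResult, setLed) are made up for illustration rather than taken from the repo.

```javascript
// Sketch only: mirrors the curl call above. Assumes Node.js 18+ (global
// fetch). Helper names are illustrative and not part of the repo.
const PARTICLE_API = 'https://api.particle.io/v1/devices';

function buildLedRequest(deviceId, accessToken, state) {
  return {
    url: `${PARTICLE_API}/${encodeURIComponent(deviceId)}/led`,
    options: {
      method: 'POST',
      // URLSearchParams yields the same form-encoded body as curl -d.
      body: new URLSearchParams({ access_token: accessToken, led: state }),
    },
  };
}

// Translate return_value as described above: 1 = on, 0 = off, -1 = error.
function describeResult(result) {
  switch (result.return_value) {
    case 1: return 'LED is on';
    case 0: return 'LED is off';
    case -1: return 'The Photon reported an error';
    default: return `Unexpected return_value: ${result.return_value}`;
  }
}

async function setLed(deviceId, accessToken, state) {
  const { url, options } = buildLedRequest(deviceId, accessToken, state);
  const response = await fetch(url, options);
  if (!response.ok) {
    throw new Error(`Particle API returned HTTP ${response.status}`);
  }
  return describeResult(await response.json());
}

// Usage (fill in your own values):
// setLed('[YOUR-DEVICE-ID]', '[YOUR-ACCESS-TOKEN]', 'on').then(console.log);
```

Splitting the request building from the network call keeps the first two helpers easy to test without a Photon attached.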

Putting it all together

Once you've uploaded all the code and each service is configured, it's time to give it all a try! You can confirm that everything went to plan by going to either your Assistant-enabled device or the Google Actions simulator, asking to talk to your app ("talk to [APP-NAME]"), and then saying or typing "turn the light on". If all goes well, your LED should turn on!

Further reading

This application is just a taste of what's possible — how far you take this framework is up to you! Here are a few resources to help you continue on your journey:
